Hyper Flow 971991551 Neural Node

The Hyper Flow 971991551 Neural Node is a modular, flow-based unit for dynamic information processing. It integrates inputs, updates state, and produces outputs under defined rules within a continuous, streamable architecture. The design emphasizes determinism, parallel hardware acceleration, and scalability without sacrificing accuracy. It targets real-time analytics and robotics control while keeping results reproducible and components reusable across domains. The sections below look at performance, implementation strategies, and deployment considerations.

What Is the Hyper Flow 971991551 Neural Node?

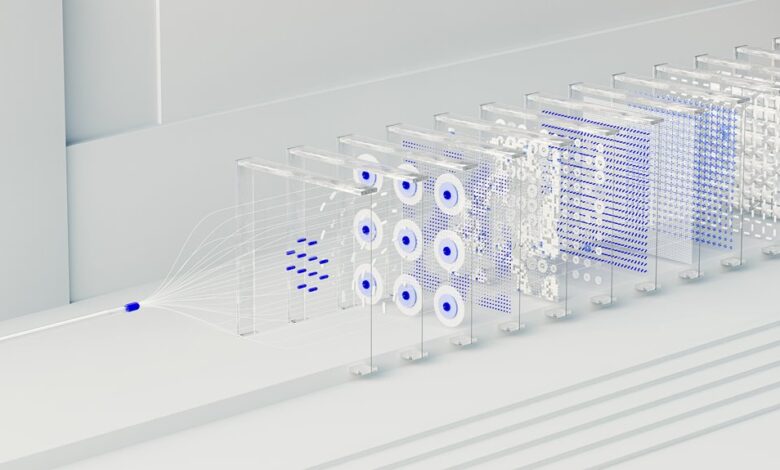

The Hyper Flow 971991551 Neural Node is a conceptual component designed to model dynamic information processing within a networked system. It represents an abstract unit that integrates inputs, updates states, and yields outputs through defined rules.

The terms "Hyper Flow" and "Neural Node" frame its function, emphasizing modularity, adaptability, and continuous operation in evolving environments.
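The integrate, update, and yield cycle described in this section can be made concrete with a small sketch. Everything named here (`FlowNode`, `weights`, `decay`, the ReLU activation, and the leaky state update) is a hypothetical illustration chosen for clarity, not the node's actual definition.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class FlowNode:
    """Hypothetical node: integrates inputs, updates state, yields an output."""

    weights: Sequence[float]
    decay: float = 0.5  # assumed leak factor applied to the previous state
    state: float = 0.0
    # Assumed output rule: ReLU on the internal state.
    activation: Callable[[float], float] = lambda x: max(0.0, x)

    def step(self, inputs: Sequence[float]) -> float:
        if len(inputs) != len(self.weights):
            raise ValueError("inputs and weights must have the same length")
        # 1. Integrate: weighted sum of the current inputs.
        drive = sum(w * x for w, x in zip(self.weights, inputs))
        # 2. Update state: leaky accumulation of the new drive.
        self.state = self.decay * self.state + drive
        # 3. Yield output: apply the activation rule to the updated state.
        return self.activation(self.state)


node = FlowNode(weights=[1.0, 2.0])
first = node.step([1.0, 1.0])   # state becomes 3.0
second = node.step([0.0, 0.0])  # state decays to 1.5
```

Because the output depends only on the inputs and the stored state, replaying the same input sequence from the same initial state reproduces the same outputs.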

How Its Flow-Based Architecture Delivers Fast, Scalable Inference

The flow-based architecture decomposes computation into modular, streamable blocks. Data moves through these blocks deterministically, which makes latency predictable. Hardware acceleration uses parallel processing and specialized units to deliver efficient throughput while preserving accuracy and adaptability, without imposing rigid timing constraints.
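One way to picture modular, streamable blocks is as a chain of generator stages, each consuming items from the previous stage as they arrive. The sketch below is an assumption-laden illustration: the helper names `block` and `compose` and the three example stages are invented here and do not come from the node's design.

```python
from typing import Callable, Iterable, Iterator


def block(fn: Callable[[float], float]) -> Callable[[Iterable[float]], Iterator[float]]:
    """Wrap a pure function as a streamable stage (hypothetical helper)."""

    def stage(stream: Iterable[float]) -> Iterator[float]:
        for item in stream:
            # Each item is processed as soon as it arrives; nothing is buffered.
            yield fn(item)

    return stage


def compose(*stages):
    """Chain stages left to right into a single pipeline."""

    def pipeline(stream):
        for stage in stages:
            stream = stage(stream)
        return stream

    return pipeline


# Three illustrative blocks; each is a pure function of its input.
scale = block(lambda x: x * 2.0)
shift = block(lambda x: x + 1.0)
clip = block(lambda x: min(x, 6.0))

pipeline = compose(scale, shift, clip)
result = list(pipeline([0.5, 1.0, 2.0, 4.0]))
```

Since every stage is a pure function, the same input stream always produces the same output stream, and stages can be swapped or reordered without touching the others.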

Scaling Across Environments: From Robotics to Real-Time Analytics

The modular, stream-based architecture lets the node scale across diverse environments, from robotic control loops to real-time analytics. It behaves consistently under varied workloads, keeping latency and throughput stable.

Scalability is a core principle of the architecture: blocks can be reconfigured, reused across domains, and isolated from one another when faults occur. This supports adaptable deployment while maintaining rigor, reliability, and flexibility across contexts.

Neural Optimization and Practical Implementation Tips

Neural optimization hinges on targeted, iterative practices that balance model fidelity against practical constraints. The main concerns are efficient training, disciplined hyperparameter tuning, and deployment considerations. One recurring theme is pruning efficiency: removing parameters that contribute little, so the model stays small without losing much accuracy.
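As a rough illustration of pruning, the sketch below applies magnitude pruning, a common technique that zeroes the weights with the smallest absolute values. The function name, the `sparsity` parameter, and the sample weights are assumptions made for this example; the source does not say which pruning method the node uses.

```python
from typing import List, Sequence


def prune_by_magnitude(weights: Sequence[float], sparsity: float) -> List[float]:
    """Zero out the smallest-magnitude fraction of weights (illustrative only)."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Rank indices by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:n_prune])
    # Keep the original ordering; only the selected positions become zero.
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
pruned = prune_by_magnitude(weights, sparsity=0.5)
```

In practice a pruned model is re-validated, and often fine-tuned, to confirm that accuracy has not degraded beyond an acceptable margin.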

Practical tips include resource-aware experimentation, modular design, and robust validation. The approach favors reproducibility, traceable metrics, and incremental improvements, and it guards against overfitting so that models generalize to new data. Overall, the goal is a lean, adaptable pipeline that does not sacrifice performance.

Conclusion

The Hyper Flow 971991551 Neural Node exemplifies a modular, flow-based architecture that delivers deterministic, parallelizable inference suitable for real-time contexts. Its lean design prioritizes scalability and reproducibility, enabling deployment from robotics to analytics. The architecture is described as supporting near-linear throughput gains with each added processing unit, which would mean that doubling the hardware nearly doubles performance without compromising accuracy. This balance of efficiency and precision positions the node as a versatile backbone for evolving, data-driven systems.
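The near-linear scaling claim can be sanity-checked with Amdahl's law, which bounds the speedup from added processing units by the fraction of work that can run in parallel. The sketch below is generic arithmetic, not a measurement of the node; the 95% parallel fraction is an assumed value chosen only for illustration.

```python
def amdahl_speedup(units: int, parallel_fraction: float) -> float:
    """Ideal speedup on `units` processors under Amdahl's law."""
    if units < 1:
        raise ValueError("units must be at least 1")
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part is unaffected; the parallel part is divided across units.
    return 1.0 / (serial_fraction + parallel_fraction / units)


# Assumed parallel fraction of 0.95 (hypothetical, not from the source).
for units in (1, 2, 4, 8):
    print(units, round(amdahl_speedup(units, 0.95), 2))
```

Under this assumption, two units give a speedup of about 1.9 and eight units about 5.9, so "doubling hardware nearly doubles performance" holds only while the serial fraction of the workload stays small.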